AI is Changing Cybersecurity – For Better and Worse

In the grand theatre of 2023, AI has seized centre stage, casting a transformative spotlight across all industries. OpenAI’s ChatGPT phenomenon is causing quite a stir, prompting a storm of excitement in every corner of the business world. The cybersecurity landscape, far from being an exception, is at the heart of this revolution.

AI has played a significant role in cybersecurity for a while. Machine learning and AI are incorporated into many threat detection and automated alert triage systems, for example. But the power and capability of AI in cybersecurity are expanding very quickly. Any technology can be used for good or ill – so AI joins the arsenal of tools available to malicious actors. Therefore, “good” AI must become a central and critical part of the cybersecurity landscape.

We, as cybersecurity PR and marketing specialists, have a unique vantage point to help shape the unfolding AI narrative. So, let’s delve into the prominent AI trends in cybersecurity that will dominate the scene in 2023 and beyond.

Prominent trends of AI in cybersecurity

AI is becoming a fundamental part of security product development

One observation from the past few months is that AI is not just a trend for the ‘good guys’. It has become an even bigger attraction for the dark side – the malicious threat actors. Security leaders today constantly emphasise the malicious use cases of publicly available AI tools like ChatGPT. These tools can potentially allow even the most novice criminal to carry out sophisticated cyberattacks.

Mikko Hyppönen, Chief Research Officer at WithSecure, recently shared insights in Help Net Security on how AI-driven automated malware campaigns are drastically changing the threat landscape. “Large language models (LLMs) like GPT, LaMDA and LLaMA are not only able to create content in human languages, but in all programming languages, too. We have just seen the first example of a self-replicating piece of code that can use large language models to create endless variations of itself. So, the only thing that can stop a bad AI is a good AI,” says Hyppönen.

In response to this growing concern, the industry will continue to see more AI security products being developed.

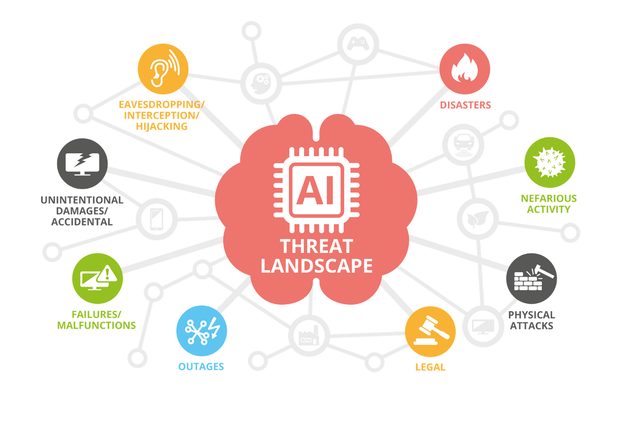

Artificial Intelligence threat landscape outlined by ENISA

Behavioural AI

Another emerging trend is the combination of supervised and unsupervised learning. Together, these techniques improve the detection of abnormal behaviour, so that access can be reduced or restricted when it occurs. Supervised algorithms identify and learn from patterns within existing labelled data, enabling them to evaluate and score the risk of unfamiliar files, such as zero-day threats. Unsupervised learning, on the other hand, uncovers anomalies and relationships within unlabelled data sets. This produces threat predictions – a task near-impossible for human analysts.
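To make the distinction concrete, here is a minimal Python sketch that uses only the standard library. It is an illustration under simplifying assumptions, not a description of any vendor's product: the feature vectors, the sample numbers and the function names are all hypothetical. A nearest-centroid scorer stands in for the supervised side, and a median-based outlier test stands in for the unsupervised side.

```python
import math
import statistics

def centroid(rows):
    """Average each feature column of a list of equal-length vectors."""
    return [statistics.fmean(column) for column in zip(*rows)]

def supervised_risk(sample, benign_rows, malicious_rows):
    """Supervised: learn from labelled examples, then score an unseen sample.

    Returns a value between 0 and 1. Values above 0.5 mean the sample sits
    closer to the malicious centroid than to the benign one.
    """
    d_benign = math.dist(sample, centroid(benign_rows))
    d_malicious = math.dist(sample, centroid(malicious_rows))
    total = d_benign + d_malicious
    return 0.5 if total == 0 else d_benign / total

def unsupervised_outliers(values, threshold=3.5):
    """Unsupervised: flag values far from the median, with no labels needed.

    Uses the modified z-score built on the median absolute deviation (MAD).
    """
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread in the data, so nothing can be called unusual
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]

# Hypothetical file features: [byte entropy, count of suspicious imports]
benign = [[4.1, 1], [4.5, 0], [3.9, 2]]
malicious = [[7.6, 9], [7.2, 11], [7.9, 10]]
unknown_file = [7.4, 8]
print(supervised_risk(unknown_file, benign, malicious) > 0.5)  # True

# Hypothetical daily outbound traffic in MB for one host, unlabelled
traffic = [12, 15, 11, 14, 13, 12, 250]
print(unsupervised_outliers(traffic))  # [250]
```

The supervised function can only judge a file against categories it has already been shown, while the outlier test needs no labels at all. That is why the two approaches are usually presented as complementary.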

The importance of behavioural AI in current-generation security products is emphasised by cybersecurity leaders like Mike Britton, the CISO of Abnormal Security. According to his recent article in Teiss, “Bringing AI to email security means that businesses are able to protect their environments fully. Understanding what normal looks like requires a combination of API integration and AI-powered analytics. AI analytics examine the data to identify each employee’s expected behavioural patterns. This includes their usual sign-in times, locations, and preferred browser and device choices. These are some of the most prominent points in catching an imposter.”
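The idea of learning what “normal” looks like for each employee can be sketched in a few lines of Python. This is an illustrative sketch only and makes no claim about how Abnormal Security or any other vendor implements it: the `SignIn` record, its fields and the simple “never seen before” rule are assumptions made for the example.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class SignIn:
    """Hypothetical sign-in record; real telemetry would be far richer."""
    user: str
    hour: int       # local hour of day, 0-23
    location: str
    browser: str
    device: str

ATTRIBUTES = ("hour", "location", "browser", "device")

def build_baseline(history):
    """Record every attribute value seen for each user in past sign-ins."""
    baseline = defaultdict(lambda: defaultdict(set))
    for event in history:
        for name in ATTRIBUTES:
            baseline[event.user][name].add(getattr(event, name))
    return baseline

def deviations(baseline, event):
    """List the attributes of a new sign-in that this user has never shown."""
    seen = baseline.get(event.user)
    if seen is None:
        return list(ATTRIBUTES)  # no history, so everything is unfamiliar
    return [name for name in ATTRIBUTES
            if getattr(event, name) not in seen[name]]

history = [
    SignIn("alice", 9, "London", "Firefox", "laptop"),
    SignIn("alice", 10, "London", "Firefox", "laptop"),
]
baseline = build_baseline(history)

suspicious = SignIn("alice", 3, "Lagos", "Firefox", "laptop")
print(deviations(baseline, suspicious))  # ['hour', 'location']
```

An exact-match rule like this is deliberately crude. A real system would tolerate small shifts, such as a sign-in one hour earlier than usual, and would weigh the attributes rather than simply listing them.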

AI and machine learning will play an even larger role in securing the entire customer experience, from account creation and login to service interaction. They will continue refining the process of assigning risk scores to login attempts, adapting responses to suspicious events based on their nature and context, rather than simply locking out a user or terminating a session.
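A graded response of this kind might look like the following Python sketch. The signal names, point values and thresholds are invented for illustration. A production system would typically learn them from data rather than hard-code them.

```python
# Hypothetical point values for suspicious signals on a login attempt.
SIGNAL_POINTS = {
    "new_device": 30,
    "unusual_location": 40,
    "impossible_travel": 60,
    "many_failed_attempts": 50,
}

def risk_score(signals):
    """Add up the points for the observed signals, capped at 100."""
    return min(100, sum(SIGNAL_POINTS.get(s, 0) for s in signals))

def choose_response(score):
    """Map a risk score to a graded action instead of a blanket lockout."""
    if score < 30:
        return "allow"
    if score < 70:
        return "require_mfa"
    return "block_and_alert"

for signals in ([], ["unusual_location"], ["impossible_travel", "many_failed_attempts"]):
    score = risk_score(signals)
    print(score, choose_response(score))
# 0 allow
# 40 require_mfa
# 100 block_and_alert
```

The middle tier is the point of the design: a moderately suspicious login triggers an extra verification step, so a legitimate user who is travelling is challenged rather than locked out.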

Artificial Intelligence in Endpoint Security

AI and machine learning are also making their way to the ‘edge’. In line with edge computing, which brings computational resources closer to remote devices, AI and machine learning models will be embedded directly on endpoints. With shared information capabilities, these models enable faster identification and neutralisation of threats in real time.

Microsoft’s Vasu Jakkal, corporate vice president for Microsoft Security, Compliance, Identity and Privacy, was recently featured in VentureBeat, where she emphasised the importance of AI and ML in endpoint security. “AI is incredibly, incredibly effective in processing large amounts of data and classifying this data to determine what is good and what’s bad. At Microsoft, we process 24 trillion signals every single day, and that’s across identities and endpoints and devices and collaboration tools and much more. Without AI, we could not tackle this,” says Jakkal. So, we are likely to see more AI-powered endpoint security products enter development.

AI has hit peak buzz – beware of the backlash

With artificial intelligence at the centre of all tech discussions and developments, a lot of exciting things are happening. During the RSA Conference 2023, dozens of sessions explored the convergence of AI and cybersecurity, reflecting AI’s growing importance in tackling cyber threats. Some vendors showcased the AI-based capabilities of their solutions. Others demonstrated their defences against malicious uses of artificial intelligence.

However, while there is certainly innovation, the danger is that a lot of so-called “AI” might not be as cutting-edge as it sounds.

So, beware of the backlash commentary. In the coming months, we can easily imagine headlines:

- “Companies are AI-washing their tech stack to mask greater deficiencies.”

- “No, your product is not AI-driven; it is just automated.”

- “Do not confuse the real power of large language models with another legacy tech that has been labelled as AI.”

Cybersecurity leaders must not get carried away with their marketing initiatives and product messaging. AI messaging must stay honest: it should differentiate the product and attract the audience, but it should also explain clearly what the technology actually does.

For more cybersecurity stories, insights and analysis, follow Code Red on Twitter and LinkedIn.