Google’s $100 Billion Mistake: Can We Trust Artificial Intelligence?

Earlier this week, Google announced its Bard AI chatbot to rival OpenAI’s ‘viral’ ChatGPT. The revolutionary accuracy, natural language processing capabilities, and deep learning functions of ChatGPT have caused massive worries for the tech giants, namely Google and Microsoft.

Why? Because users are starting to consider ChatGPT as an alternative to general search engines. Its human-like responsiveness and in-depth ability to understand and respond to diverse prompts surely make the AI chatbot a perfect next-gen information library.

So, hoping to cash in on this AI chatbot trend, Google expedited its development of Bard, sharing a first glimpse on Monday, February 6th. But not everything went as planned.

What went wrong with Google Bard AI?

According to the tech giant, Bard will revolutionise how people search the web. It will provide more detailed and conversational responses to queries, instead of a list of websites and links.

To showcase this, the company released a short advert, where the Bard chatbot is asked:

“What new discoveries from the James Webb Space Telescope (JWST) can I tell my nine-year-old about?”

Bard is an experimental conversational AI service, powered by LaMDA. Built using our large language models and drawing on information from the web, it’s a launchpad for curiosity and can help simplify complex topics → https://t.co/fSp531xKy3 pic.twitter.com/JecHXVmt8l

— Google (@Google) February 6, 2023

The AI chatbot provided a detailed response to the question, one point of which stated that JWST took the very first image of a planet outside the solar system. However, this information was inaccurate. As NASA professionals later tweeted, Gael Chauvin and his fellow researchers actually captured the first such image with VLT/NACO using adaptive optics.

This amusing yet rather serious mistake has raised concerns about the capabilities of Google’s AI and sent its shares plummeting. The search engine’s parent company, Alphabet, saw its shares fall by 7%, wiping $100 billion off its market value.

AI chatbots under scrutiny: Can we blindly trust AI?

The answer is no; we cannot trust AI chatbots blindly, at least not today. Undoubtedly, we have seen unprecedented progress and innovation in Artificial Intelligence and Deep Learning in the past few years. In the cybersecurity industry, AI has come a long way in terms of understanding user behaviour and identifying malicious elements.

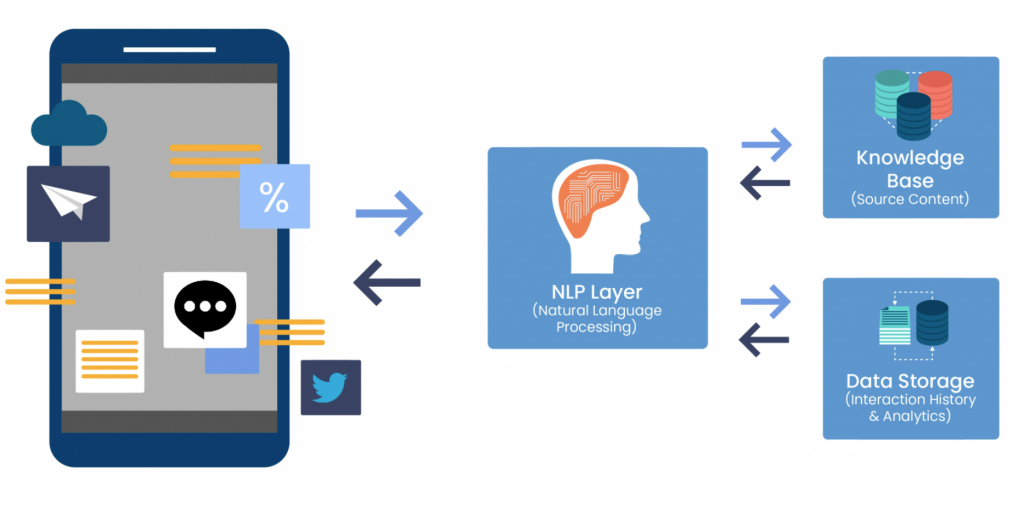

However, when it comes to real-time information processing and providing human-like responses to complex prompts, AI needs continuous ‘human inspection’. This is because the information these chatbots rely on is ultimately sourced from existing repositories or data libraries. It is only natural that some of this information is inaccurate or only partially accurate.

Without continuous vetting and scrutiny, the accuracy of these AI solutions cannot be guaranteed. This is, in fact, what happened in the case of Google’s AI chatbot. With the increasing popularity of ChatGPT, and with Microsoft also announcing its Bing Chatbot AI, Google certainly felt the pressure to expedite Bard’s release.

It is important to understand that these Artificial Intelligence models learn from their source data largely through unsupervised learning – an automated machine learning training process that does not rely on human-labelled examples. Without prolonged monitoring, assessment, and quality checks, some prompts will ultimately return inaccurate or even biased responses.

So, Google’s rush to enter and disrupt the market might not have been a smart move. Bard AI’s release date is currently unknown, but it was originally expected some time this month.

There’s also the concern that if more time and due diligence aren’t put into developing these technologies, they could create security risks. We have recently seen research on how cybercriminals can potentially use ChatGPT to launch more calculated phishing campaigns and scams. Without more scrutiny, future AI chatbots could harbour the same risks.

So, Google Bard AI needs more due diligence and technical inspection before it is opened to the public, to ensure that Google doesn’t repeat such grave errors.

For more insights and analysis, follow Code Red on Twitter and LinkedIn.